Topic 2: Asynchronous scheduling and exascale workflows

To reduce turnaround times of ESM simulations on future exascale HPC systems and to allow for novel ESM applications, future ESM codes need to become more flexible and portable. This is especially important for simulating extreme events at very high spatial and temporal resolution, possibly including chemical and aerosol weather.

Besides further theoretical development of the asynchronous scheduling concept and demonstrations based on the ESM dwarfs from Topic 1, work in this topic focuses on developing on-line diagnostic tools that run independently of the actual ESM code and can later be used to alter the course of a simulation during its execution.

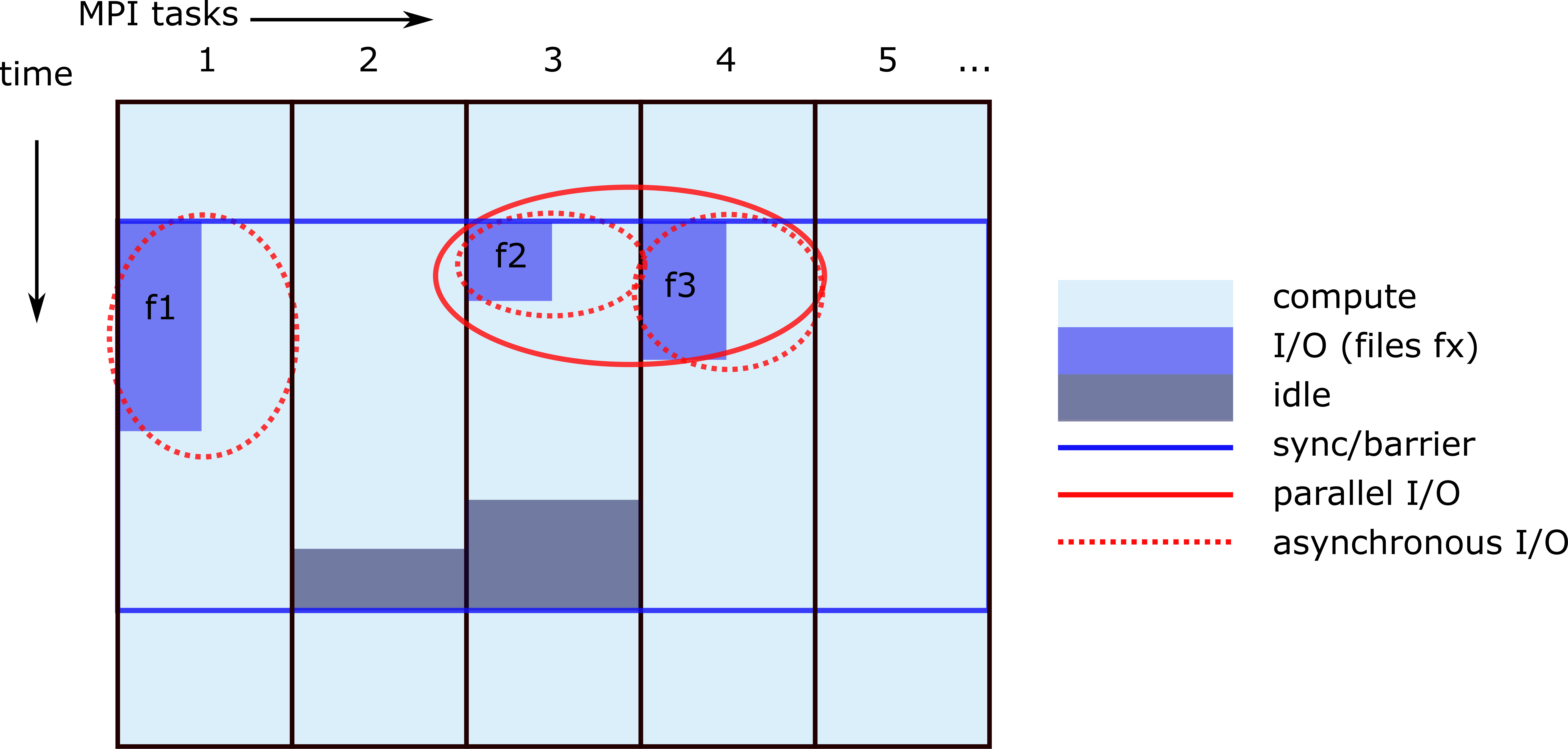

Asynchronous model I/O

Serial writing and reading of data (I/O) can cause a significant bottleneck in high-resolution model simulations.

Asynchronous scheduling is a well-established software paradigm, but it is rarely applied in ESM codes, largely for legacy reasons. The asynchronous ESM concept might eventually allow for entirely new scientific workflows in which model components are dynamically added to or removed from the execution chain based on scientific or technical on-line diagnostic tools. As a first step towards asynchronous scheduling, ESM code bases need to be adapted to use parallel and asynchronous I/O.
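The idea of asynchronous output can be illustrated with a minimal Python sketch. It is not taken from any ESM code: the functions `step`, `write_snapshot` and `run`, the file naming, and the three-value state are all hypothetical stand-ins. The sketch only shows the pattern of handing each output snapshot to a background thread so that the time loop does not wait for the disk.

```python
import os
import tempfile
from concurrent.futures import ThreadPoolExecutor


def step(state):
    """Hypothetical stand-in for one model time step."""
    return [0.5 * (x + 1.0) for x in state]


def write_snapshot(path, snapshot):
    """Write one snapshot to disk; executed in the background I/O thread."""
    with open(path, "w") as f:
        f.write(" ".join(f"{x:.6f}" for x in snapshot))
    return path


def run(n_steps, outdir):
    """Advance the toy model and queue one output file per step."""
    state = [0.0, 2.0, 4.0]
    pending = []
    # A single worker keeps the writes in order while the main thread computes.
    with ThreadPoolExecutor(max_workers=1) as io_pool:
        for n in range(n_steps):
            state = step(state)
            snapshot = list(state)  # copy, so later steps cannot alter queued output
            path = os.path.join(outdir, f"out_{n:04d}.txt")
            pending.append(io_pool.submit(write_snapshot, path, snapshot))
    # Leaving the with-block has waited for all queued writes to finish.
    return [f.result() for f in pending]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as outdir:
        print(run(3, outdir))
```

In a production model the same role would typically be played by dedicated I/O processes or a parallel I/O library rather than a Python thread; the sketch only conveys the decoupling of computation and output.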

(Other) Exascale Workflows

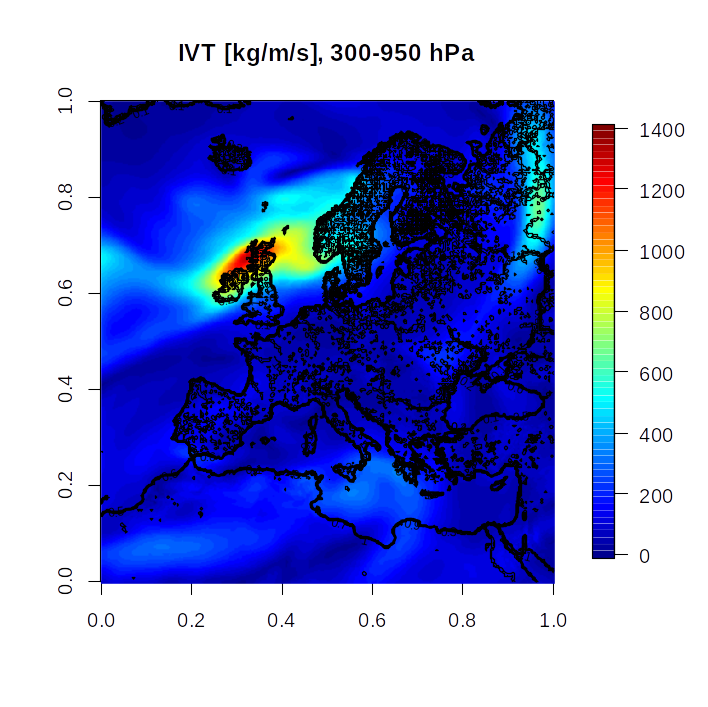

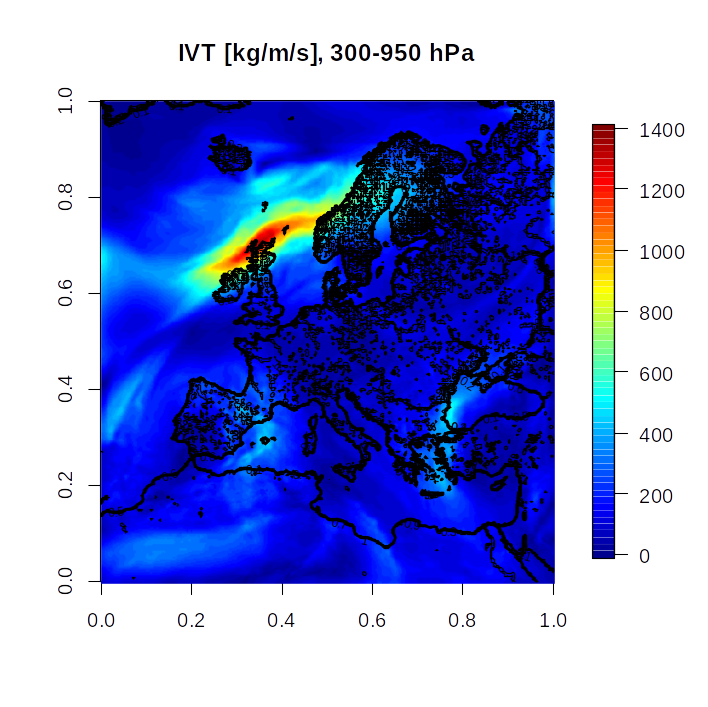

In addition to organizing how output is written, further steps can be taken during and after the model simulation to optimize exascale workflows. On-line diagnostics calculated during a simulation can cover different aspects of the hydrological cycle, atmospheric transport, and chemistry. In particular, this includes optimizing nested regional downscaling workflows and diagnostics based on Lagrangian transport.
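As a rough illustration of what an on-line diagnostic can look like, the following Python sketch accumulates the mean, variance and maximum of a quantity step by step, without storing the time series. The class name `RunningStats` and the sample values are hypothetical; the update rule is Welford's streaming algorithm, chosen here for illustration and not prescribed by the text above.

```python
class RunningStats:
    """Streaming mean, population variance and maximum of a scalar diagnostic."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # sum of squared deviations from the current mean
        self.maximum = float("-inf")

    def update(self, value):
        """Fold one new value into the statistics (Welford update)."""
        self.n += 1
        delta = value - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (value - self.mean)
        self.maximum = max(self.maximum, value)

    @property
    def variance(self):
        """Population variance of all values seen so far."""
        return self._m2 / self.n if self.n else 0.0


if __name__ == "__main__":
    stats = RunningStats()
    # Hypothetical per-step values, e.g. a domain-mean tracer concentration.
    for value in [1.0, 2.0, 3.0, 4.0]:
        stats.update(value)
    print(stats.mean, stats.variance, stats.maximum)
```

Because only a handful of numbers are kept per diagnostic, such statistics can be written out far less often than the full model fields.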

One example of such a workflow optimization is adaptive mesh refinement (AMR). It can reduce the I/O data volume by coarsening the grid in regions of weak gradients and can, for example, be applied to fields of chemical tracers.
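The coarsening idea can be sketched in one dimension. The Python example below is a deliberately simplified, hypothetical illustration and not a real AMR scheme, which would be hierarchical and multi-dimensional: neighbouring cell pairs whose values differ by less than an assumed `threshold` are merged into one block, so fewer values need to be written.

```python
def coarsen(field, threshold):
    """Merge neighbouring cell pairs whose values differ by less than threshold.

    Returns a list of (start_index, width, value) blocks.
    """
    blocks = []
    i = 0
    while i < len(field):
        if i + 1 < len(field) and abs(field[i + 1] - field[i]) < threshold:
            # Weak gradient: store one averaged value for two cells.
            blocks.append((i, 2, 0.5 * (field[i] + field[i + 1])))
            i += 2
        else:
            # Strong gradient (or last cell): keep full resolution.
            blocks.append((i, 1, field[i]))
            i += 1
    return blocks


def reconstruct(blocks):
    """Expand the blocks back onto the original grid."""
    out = []
    for _, width, value in blocks:
        out.extend([value] * width)
    return out


if __name__ == "__main__":
    # Hypothetical tracer field with a sharp front in the middle.
    tracer = [1.0, 1.0, 1.0, 1.0, 5.0, 9.0, 9.0, 9.0]
    blocks = coarsen(tracer, threshold=0.5)
    print(len(tracer), "cells stored as", len(blocks), "blocks")
```

The reconstruction is exact only where merged cells were identical; elsewhere the error is bounded by half the chosen threshold, which is the trade-off between data volume and accuracy.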